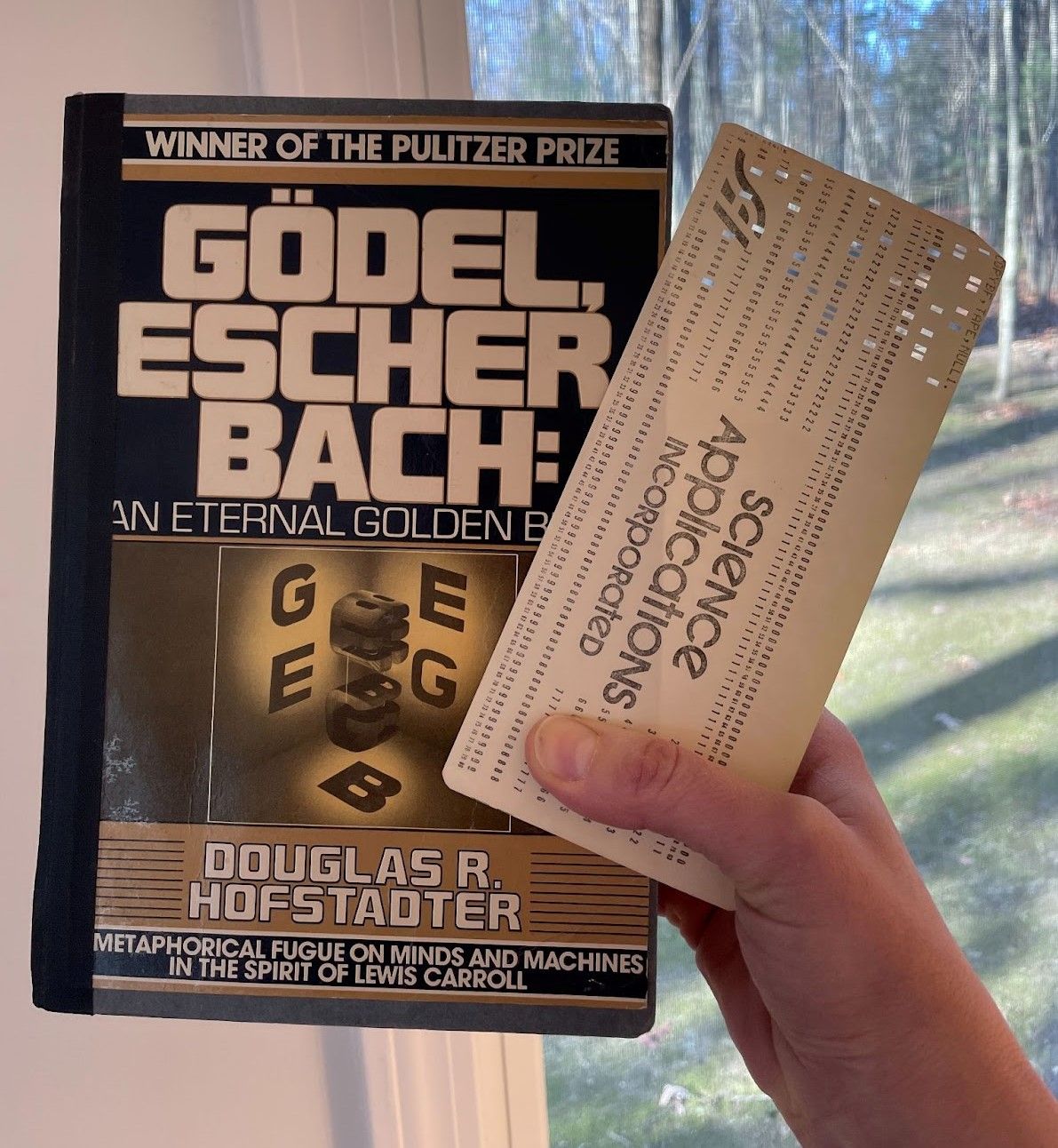

As a teenager, I discovered a worn copy of the book Gödel, Escher, Bach: An Eternal Golden Braid by Douglas Hofstadter on a bookshelf at home. It still had a computer punch card in it that my mom had used as a bookmark, back when she briefly worked as a programmer in the early 1980s. Reading that book was like falling into another world. I found myself thinking about the mind and computers in brand new ways. I learned about Alan Turing’s work for the first time. I wondered, “Will computers ever think?” and “What is thinking, anyways?” I haven’t stopped wondering about these questions since.

Though I considered pursuing a career in computer science or cognitive science, I wound up taking a different path. I’m a science journalist who also writes books and articles that introduce scientific concepts to kids and teens. Though I’ve written about everything from outer space to dinosaurs, the topics I gravitate towards most often are computers, robots, and artificial intelligence.

For example, my newest book for kids and teens, Welcome to the Future: Robot Friends, Fusion Energy, Pet Dinosaurs and More, explores how ten different technologies could change the world in the future, starting with robot servants and ending with superintelligence. My job when I begin to write a new article or book, including this one, is to work towards understanding the topic as completely as I can. I care very much about giving kids accurate information. With very technical topics, such as artificial neural networks, grasping the concepts poses a huge challenge! On several occasions throughout my career, I’ve realized that I’d been thinking about things all wrong.

Credit: Marcin Wolski

I’d like to share some of the new understandings I’ve come to about AI and cognitive science along the way, as well as what changed my mind or shifted my perspective. I hope these pointers help when you are communicating about AI to those who aren’t experts.

1. Artificial neural networks are not brains

For a long time, I happily compared artificial neural networks (ANNs) to the human brain when trying to explain them to readers. I thought that artificial neurons and biological neurons had more in common than they do. This led to a misconception that if we had ANNs as large as a human brain, they would mimic its abilities. Now I know that this is incorrect.

I have learned that brains and ANNs function totally differently. The most I say now is that ANNs were inspired by the brain. Both involve very complex networks of billions of small bits (firing neurons in the brain; parameters in an ANN) interacting to accomplish a goal. But that seems to be where the similarity ends.

My “Ah-ha!” moment in this area happened when I read the article, A Brief History of Neural Nets and Deep Learning, by Andrey Kurenkov, a PhD student at the Stanford Vision and Learning Lab. I learned that an ANN is basically a statistical technique that learns patterns from a bunch of training data, and can then apply these patterns to data it has not seen during training. As long as new data is similar to what it saw during training, this can work well, but the fact that ANNs are essentially doing pattern matching does limit their ability to do human-like reasoning.
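Here is a small sketch of that limitation. It is not a neural network: a simple nearest-neighbor lookup stands in for one, and the hidden rule, the data, and the function names are all made up for illustration. What it shares with an ANN is that it can only reuse patterns found in its training data.

```python
# Toy illustration only: a nearest-neighbor lookup stands in for a trained
# network. It is NOT an ANN; it just reuses whatever it saw in training.

def target(x):
    """The hidden rule behind the data (hypothetical; the model never sees it)."""
    return x * x

# Training data: 101 evenly spaced inputs between 0 and 1.
train_x = [i / 100 for i in range(101)]
train_y = [target(x) for x in train_x]

def predict(x):
    """Return the output stored for the closest training input."""
    nearest = min(range(len(train_x)), key=lambda i: abs(train_x[i] - x))
    return train_y[nearest]

def error(x):
    """Absolute gap between the prediction and the true value."""
    return abs(predict(x) - target(x))

in_range = 0.503      # unseen, but similar to the training inputs
out_of_range = 3.0    # unseen, and unlike anything in the training inputs

print(f"error at {in_range}: {error(in_range):.4f}")
print(f"error at {out_of_range}: {error(out_of_range):.4f}")
```

The first error is tiny and the second is large. The lookup has no idea that the rule is “square the number”; it only matches new inputs to old ones.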

In contrast, humans regularly make analogies, combine ideas, and figure things out without this sort of training. For example, a toddler seeing a giraffe for the very first time can infer that it eats and drinks, that it has spots on the side of its body she can’t see, that it won’t walk through a wall or disappear, and so much more. No one knows how to make computers perform this sort of higher-level reasoning, or at least not nearly as well as humans.

When we have orders of magnitude more data and more computing power, will this be possible? Perhaps. I interviewed Douglas Hofstadter back in 2016, and he told me, “I hope that brute force won’t crack open the secrets of mind, but I don’t know.” Brute force has already been able to do so much more than early computer scientists thought, so I think that it has the potential to get us there. But I also think the ANN approach alone likely won’t be enough.

2. Brains are not blank slates

Seeing, grasping, navigating – all of these things seem easy to humans, but have been extraordinarily difficult to program into machines. I used to think that the reason was simple: people spent many years as babies and small children practicing these tasks. If a robot or computer practiced for the same amount of time, it seemed to me that it should be able to get just as good at the task.

Nope. That’s not the case.

One very cool reason for this is that the human species and all the species we evolved from have been practicing these tasks for millions of years. Evolution managed to produce bodies and brains that can see, hear, grasp, navigate, and so much more with very little practice required. Researchers have found that many animals are born with some common sense concepts already in place. For example, newborn chicks know that an object that moves out of view still exists. I got many of these ideas from a podcast interview with Liz Spelke, a psychologist at Harvard.

So, one reason brains still beat machines at many types of tasks is that they don’t start from scratch when they are learning. Certain senses and skills are either innate or instinctual.

3. It should be possible to build a mind in a machine

Though brains have some clear evolutionary advantages over machines, it should still be possible to give a machine a mind. I once believed that this would be impossible, because I thought there was something “special” about the human mind – some spirit or soul that could never inhabit a computer program. I was raised religious, so that certainly had something to do with my attitude.

Over time, as I gradually gave up my attachment to religion and devoured books about science, I realized that everything about who I am (including my sense of self) could arise from the complex interactions of neurons in my brain. I am my brain. And if I am my brain, then it follows that an artificial brain could gain some sort of consciousness or self. Just because a computer is made out of silicon instead of cells shouldn’t prevent it from becoming a thinking being. (Of course, it may be a very alien thinking being that is difficult for humans to relate to.)

One of the books I read was another of Hofstadter’s, I Am a Strange Loop. In this book, he points out that humans start out as infants that gradually gain conscious thought and intelligence as they grow up. In addition, many animals exhibit very intelligent behavior or show signs of having a sense of self. There seems to be a continuum of thought and intelligence (and most likely also consciousness) from the most basic of brains to the most complex. And that continuum could someday include artificial brains.

4. Superintelligence is not inevitable

This is something I only realized last year. When I read Nick Bostrom’s book Superintelligence, I was convinced that it was only a matter of time before AI would begin improving itself until it could out-think and outsmart humanity. Superintelligence seemed inevitable, though I thought it would likely take hundreds of years or more before it would arrive.

But then I spoke with Melanie Mitchell, a computer scientist at the Santa Fe Institute, and read her book, Artificial Intelligence: A Guide for Thinking Humans. She pointed out that we don’t even know if it’s possible for superintelligence to exist. “I don’t think it’s obvious at all that we could have general intelligence of the kind we humans have evolved without the kinds of limitations we have,” she told me. Those limitations include getting bored and distracted or having to care for our bodily needs. We don’t know yet if all the qualities we love most about computers can co-exist in one machine (or mind) with the qualities we love most about human brains.

5. Deep learning is not deep understanding

The name “deep learning” is an unfortunate one because it makes people think that computers are learning things deeply – as in, understanding things. I always explain that “deep” refers to the literal “depth” of the layers in the network, and NOT to any depth of understanding. What a deep learning model is actually doing is matching patterns.

This misconception is going to be a very tough one to overcome.
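To make the “depth” point concrete, here is a toy sketch in which a network is nothing more than a list of layers, and its depth is the length of that list. The layer sizes and every weight are made up for illustration; real models learn their weights from data and are vastly larger.

```python
# Toy sketch: every weight below is made up for illustration.

def relu(values):
    """Replace negative numbers with zero."""
    return [max(0.0, v) for v in values]

def dense(weights, biases, inputs):
    """One layer: weighted sums of the inputs plus a bias, then a ReLU."""
    sums = [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]
    return relu(sums)

def forward(layers, inputs):
    """Pass the inputs through each layer in order."""
    for weights, biases in layers:
        inputs = dense(weights, biases, inputs)
    return inputs

# A hypothetical layer with 2 inputs and 2 outputs.
layer = ([[0.5, -0.25], [0.25, 0.5]], [0.0, 0.1])

shallow_net = [layer]        # depth 1
deep_net = [layer] * 4       # depth 4: the same arithmetic, stacked

print("shallow depth:", len(shallow_net))
print("deep depth:", len(deep_net))
print("deep output:", forward(deep_net, [1.0, 2.0]))
```

Going from the shallow network to the deep one adds more rounds of the same multiply-and-add arithmetic. Nothing in the extra layers corresponds to understanding.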

6. AI can be creative

AI doesn’t require deep understanding to do amazing things. I once did a school project about AI in which I claimed that computers would never be able to be creative. I was wrong. Now we have AI generating music, art, stories, code, science experiments, product designs, and so much more.

Earlier this year, I spent way too much time playing with a demo of DALL-E, an offshoot of OpenAI’s GPT-3 that draws images based on text input. This model can combine concepts to illustrate new ones it hasn’t encountered before, such as an elephant dragon or a kangaroo made of carrots. I had to edit a sentence in my book that said AI couldn’t combine ideas in this way. Now it can.

You might hesitate to call the AI itself “creative” – humans still control its input, tweak its process, and choose its best output. The AI is still more like a tool than an artist or thinker. But it’s a very different type of tool than any that has been available up until now. This type of AI is certainly boosting human creativity and adding to the amount and type of art in the world.

This type of creativity, I believe, is one of the greatest things AI has to offer. Human thought has expanded to include machine thought. And because machine thought works in such an alien way and has such different advantages, humans are now able to accomplish things that are impossible to accomplish with brains alone. When I interviewed Gary Marcus, author of Rebooting AI: Building Artificial Intelligence We Can Trust, he said, “I think that at some point AI will fundamentally transform science and technology and medicine. It’s going to be phenomenal.”

7. People fear AI for the wrong reasons

People who don’t know how AI works are often willing to attribute much more agency and personality to machines and especially to robots than they really deserve.

Most stories in which AI goes wrong depict the technology destroying humanity in some manner. Robots enslave or control humans, or a computer exterminates humanity because it has decided the world would be better off without people in it, or a computer system blindly following instructions turns the entire universe into paperclips. These are all very interesting thought experiments, but none of them are going to happen any time soon, if ever. As I wrote in my book Welcome to the Future, Watson may have defeated humans at Jeopardy!, but, “Watson can’t turn against humans and become an overlord any more than a toaster could suddenly decide to freeze bread instead of heating it.”

That said, AI has many very scary applications – not because the AI may decide to do anything evil, but because people may decide to do evil things with AI. AI makes it easier to build autonomous weapons, systems of authoritarian control, and more. We should fear and seek to stop people who may use AI for these purposes. But we should not fear the technology itself. We should seek to understand it as best we can so we can use it to make the world a better place.

Where do we go from here?

These are the most important ideas about AI that I’ve gained in my career so far. But I’m ready and waiting for surprises that expand my knowledge – or prove me wrong all over again.

Bio

Kathryn Hulick is the author of Welcome to the Future: Robot Friends, Fusion Energy, Pet Dinosaurs and More (illustrated by Marcin Wolski, published by Quarto, 2021). This book for kids and teens explores ten different technologies that could change the world in the future. The book challenges readers to think about the ethics of each technology – how can we use it to benefit all of humanity? As a freelance science journalist, she regularly contributes to Muse magazine, Front Vision, and Science News for Students. Here are two articles she’s written for kids about AI:

Easy for you, tough for a robot - Engineers are trying to reduce robots’ clumsiness — and boost their common sense

Teaching robots right from wrong - Scientists and philosophers team up to help robots make good choices

Hulick lives in Massachusetts with her husband, son and dog. In addition to writing and reading, she enjoys hiking, painting, and caring for her many house plants. Her website is kathrynhulick.com. You can follow her on Twitter @khulick or on Instagram or TikTok @kathryn_hulick.

My Favorite AI and Cognitive Science Resources for a General Audience

The website Science News for Students covers all kinds of science, including AI, in a manner that is fun and accessible for teens: https://www.sciencenewsforstudents.org/topic/computing

Lex Fridman. “Lex Fridman Podcast.” https://lexfridman.com/podcast/

Ayanna Howard. Sex, Race, and Robots: How to Be Human in the Age of AI. Audible Originals, 2020.

Gary Marcus and Ernest Davis. Rebooting AI: Building Artificial Intelligence We Can Trust. Pantheon Books, 2019.

Melanie Mitchell. Artificial Intelligence: A Guide for Thinking Humans. Pelican Books, 2019.

Max Tegmark. Life 3.0: Being Human in the Age of Artificial Intelligence. Knopf, 2017.

Nick Bostrom. Superintelligence: Paths, Dangers, Strategies. Oxford University Press, 2014.

Douglas Hofstadter. I Am a Strange Loop. Basic Books, 2007.

Douglas Hofstadter. Gödel, Escher, Bach: An Eternal Golden Braid. Basic Books, 1979.