Artificial Intelligence (AI) systems have been involved in numerous scandals in recent years. Take, for instance, the COMPAS recidivism algorithm, which estimates the likelihood that a defendant will commit another crime in the future. It was widely used in the US criminal justice system to inform decisions, at all stages of the process, about who can be set free. In 2016, ProPublica exposed that COMPAS’s predictions were biased: its mistakes favored white over black defendants. Black defendants were twice as likely as white defendants to be labeled high risk but not go on to reoffend. White defendants were 1.67 times more likely than black defendants to be labeled low risk but go on to reoffend. Examples of AI systems that are concerning for similar and other reasons abound. What can we do to mitigate the potential adverse effects of AI systems and harness their power to create positive impacts?

A reasonable first step is to articulate the harms we wish to avoid and the positive impacts we hope to attain. Many organizations engage in such activities, and the typical result is a set of principles, often called “AI ethics principles”. For example, Google states that it believes that AI systems should: (1) Be socially beneficial; (2) Avoid creating or reinforcing unfair bias; (3) Be built and tested for safety; (4) Be accountable to people; (5) Incorporate privacy design principles; (6) Uphold high standards of scientific excellence; and (7) Be made available for uses that accord with these principles.

There are now dozens, if not hundreds, of different sets of AI ethics principles out there, written by governments, inter-governmental organizations, corporations, non-profits, and academics. Their authors include the US Department of Defense, Australia, China, the European Commission, UNESCO, Google, Microsoft, Meta, and many others.

The flood of AI ethics principles has attracted critical attention. On their own, AI ethics principles are insufficient to improve AI systems. The principles must be operationalized and integrated into organizations’ workflows. Yet, as studies like those led by Jacqui Ayling and Jessica Morley show, the proliferation of AI ethics principles has not been accompanied by substantial development of tools to implement them, and the tools that have been developed are limited.

It is tempting to respond to the present state of AI ethics by abandoning the search for principles. Given that there are so many principles out there already and so few tools to operationalize them, organizations might be inclined to simply use some of the existing principles and focus their attention on operationalization.

However, a difficult question to answer is which principles to use. How do we know that organizations will choose well? What is to prevent them from cherry picking, for example? One way to go is to sift through the existing literature, looking for universal AI ethics principles. The hope might be that if we find universal principles, they could guide the development and evaluation of AI systems everywhere. Organizations that develop AI systems could focus on operationalizing them. Those who evaluate AI systems, such as investors, regulators, auditors, and consumers, could examine AI systems based on these principles.

I advise against this approach. In this article, I explain why it is unlikely that universal AI ethics principles will be found, and I discuss reasons to avoid using dominant trends as a default. Instead, I suggest that each organization should articulate its own AI ethics principles, and I sketch ways to do so responsibly.

The search for universal AI ethics principles

In recent years, several research groups have sought unifying themes in current AI ethics principles. They all found unifying themes, but not the same ones. For example, a study led by Anna Jobin manually coded 84 AI ethics documents. The team came up with 11 unifying themes: transparency (appeared in 87% of the documents), justice and fairness (81%), non-maleficence (71%), responsibility (71%), privacy (56%), beneficence (49%), freedom and autonomy (40%), trust (33%), sustainability (17%), dignity (15%), and solidarity (7%). In another study, Luciano Floridi and Josh Cowls examined six influential AI ethics documents. They checked whether the four ethical principles that are prevalent in bioethics -- beneficence, non-maleficence, autonomy, and justice -- are also prevalent in the AI ethics documents. They found that these four principles are indeed prevalent, and they added a fifth prevalent principle: explicability. Other studies, such as those led by Yi Zeng, Jessica Fjeld, and Thilo Hagendorff, find other sets of unifying themes.

The fact that different studies find different unifying themes is unsurprising given that they analyze different sets of principles and use different methodologies. The different methodologies are not easily comparable and none of them seems clearly superior. Therefore, we find ourselves with yet another multiplicity. Now we have multiple unifying themes.

What shall we do in the face of the competing sets of unifying themes in AI ethics principles? One way to go is to look for commonalities between them. Are there unifying themes among the unifying themes? The likely answer is “No”. Different groups of researchers would probably find different, equally legitimate, “unifying unifying themes”.

Moreover, unifying themes are a far cry from principles. Identifying “privacy” or “fairness” as unifying themes doesn’t tell us what the principles should say exactly or why they are important. In fact, studies like Fjeld’s and Jobin’s find convergence in AI ethics themes but also observe that different AI ethics documents interpret and justify those themes differently. Therefore, we can expect that even if researchers converged on a set of unifying themes for AI ethics, there would not be convergence on concrete principles.

Think critically about dominant AI ethics themes

Even though there are no fully fleshed out, universal AI ethics principles or themes, it is clear that some broad themes are dominant. Accountability, autonomy, privacy, and others recur in AI ethics principles as well as in the studies that look for unifying themes. One might be tempted to think of them as helpful approximations of what the underlying, universal principles might be. If you think of the unifying themes in this way, it might make sense to choose a subset of those dominant themes and develop them into operationalizable principles. It is important to develop operationalizations of the existing themes and principles. At the same time, it is also important to think critically about them.

Studies such as Fjeld’s and Jobin’s show that present AI ethics principles have been written predominantly in the Global North. Therefore, it would come as no surprise if dominant themes reflect Western value systems and conflict with other value systems.

For example, Mary Carman and Benjamin Rosman argue that African values conflict with a prevalent AI ethics theme: autonomy. As you might recall, autonomy is one of the (Western) bioethics-inspired principles that Luciano Floridi and Josh Cowls identify in AI ethics. According to this principle, individuals are entitled to make decisions for themselves. Carman and Rosman argue that this principle conflicts with African communitarian values, which favor joint decision-making over the individual decision-making that autonomy principles promote. In the case of bioethics, as Carman and Rosman point out, the principle of autonomy has been widely criticized for this reason.

Thilo Hagendorff argues that men dominate the authorship of AI ethics principles. Moreover, he argues that present AI ethics focuses on values that can be mathematically operationalized (such as accountability and safety). Building on the work of Carol Gilligan, he argues that “calculation-based” ethical approaches are a part of male-dominated justice ethics. According to this work, women tend to think of ethical problems differently, using a wider framework of empathetic, emotion-oriented ethics of care. Hagendorff attributes the prevalence of the calculation-based approach in AI ethics to the dominance of men.

Hagendorff suggests that the alternative is thinking of AI ethics using concepts such as care, welfare, and social responsibility and considering AI applications in the context of a larger network of social and ecological dependencies and relationships. He argues that reports by AI Now, an organization primarily led by women, take this approach.

Regardless of whether Hagendorff is right about the particular gender differences in ethical approaches, it is likely that the gender of the authors of AI ethics principles influences the content of the principles because one’s gender is relevant to one’s experience of power structures and therefore to one’s ethical views.

Given the lack of diversity in the perspectives involved in generating AI ethics principles, they seem to represent and promote the interests of a select few, namely men in the Global North. Therefore, universally adopting dominant themes involves at least two risks. For one thing, populations that do not subscribe to the values encapsulated in the dominant themes might be reluctant to use AI systems conforming to principles derived from those themes. The potential outcome is unwittingly restricting the uptake of those systems. More broadly, adopting current dominant themes universally runs the risk of subjugating broad populations to principles formulated by a small elite, thereby exacerbating existing power imbalances.

You might wonder whether the solution is to diversify the groups that author AI ethics principles. It is not. Diversifying the groups that author AI ethics principles is an excellent idea for many reasons, and there are already attempts to do so. For example, UNESCO’s AI ethics principles received endorsements from representatives of all of its member states, 193 countries in total. Having said that, it is important to acknowledge the bounds of such attempts.

Those who aggregate AI ethics principles into themes have values and priorities themselves, which shape the themes they come up with. We’re already used to thinking of individuals as influenced by their values and priorities, and the same is true for organizations that produce AI ethics principles and trends.

A study led by Yi Zeng shows a correlation between the content of AI ethics principles and the institutional affiliation of their authors. Governments mention privacy and security more than other types of institutions, but mention accountability less. Corporations mention transparency and collaboration more, but mention privacy and security less. Academia, non-profits, and non-governmental organizations mention humanity and accountability more, but mention fairness less. Zeng and his team hypothesize that the correlation is due to institutional interests. For example, governments may mention accountability less because it is a sensitive topic for them.

Formulating AI ethics principles responsibly

I propose that organizations should develop their own AI ethics principles, based on their own values. We already expect organizations to reflect on their own values when it comes to organizational codes of ethics, mission statements, etc. The same should be expected when it comes to AI ethics.

A key question is how to formulate AI ethics principles responsibly and how to tell that an organization has developed its principles responsibly. For example, when an investor, auditor, regulator, or consumer evaluates a startup that develops an AI system, how would they tell if the startup’s AI ethics principles are cherry picked, self-serving, partial, etc.?

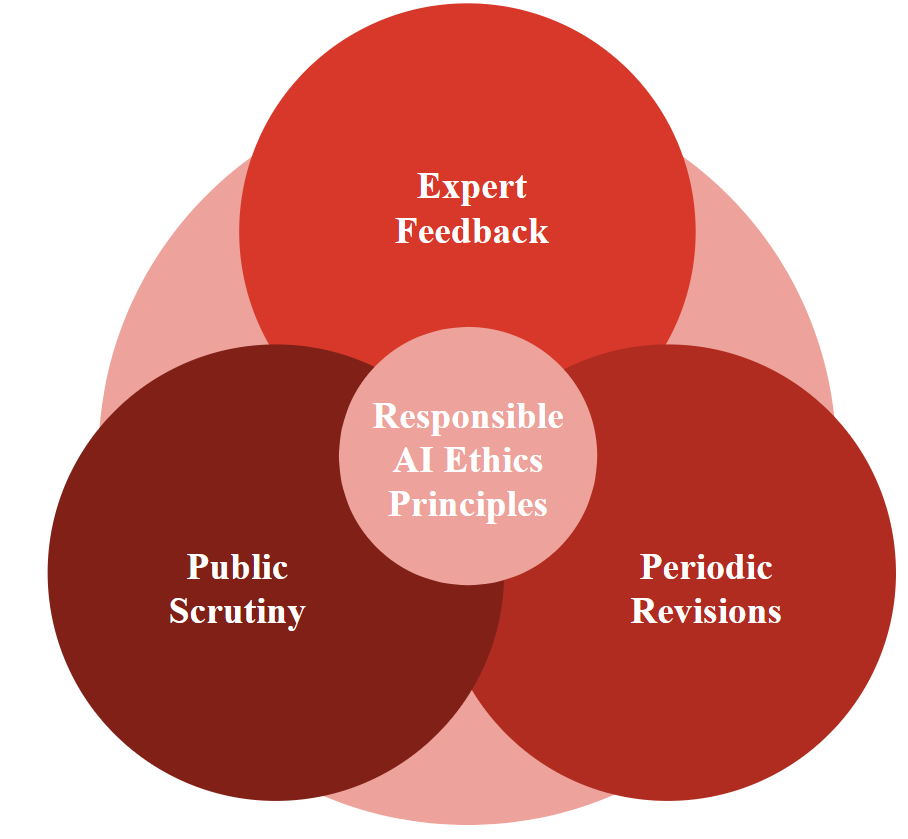

I suggest focusing on the process by which the organization formulates the principles, rather than just the principles themselves. The process should involve the following components:

- Incorporating feedback from diverse stakeholders and from experts, especially experts in AI ethics and organizational behavior

- Publicizing the principles and the process behind their formation

- Periodically revising the principles, incorporating additional feedback from experts and stakeholders

Feedback from experts and diverse stakeholders provides quality control, mitigating the risk of formulating irresponsible principles: Experts can help build on the work that has already been done, and feedback from stakeholders can help align the organization’s work with the interests and needs of those affected by it. Publicizing the principles and the process that led up to their formulation helps keep the organization accountable. Periodic revisions push the organization forward, gradually improving the principles as the technology develops and related risks and opportunities are better understood.

Focusing on the process gives organizations leeway in deciding their own values, while allowing evaluators to assess the quality of the principles indirectly. Investors, auditors, regulators, consumers, and others can check who was part of the formulation process and the extent to which their feedback was considered, how transparent the organization is about its principles and formulation process, and how effective revision procedures are.
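To make the idea of process-focused evaluation concrete, here is a minimal Python sketch of how an evaluator might record the three components above and flag what is missing. It is only an illustration: the `FormulationProcess` record, its field names, and the thresholds (at least two distinct stakeholder groups, at least one revision per year) are hypothetical assumptions of this sketch, not an established standard, and a real assessment would rest on qualitative judgment rather than a checklist.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class FormulationProcess:
    """Hypothetical record of how an organization formulated its principles."""
    experts_consulted: List[str] = field(default_factory=list)       # e.g. "AI ethics"
    stakeholders_consulted: List[str] = field(default_factory=list)  # e.g. "customers"
    principles_published: bool = False
    process_published: bool = False
    revisions_per_year: int = 0


def process_gaps(p: FormulationProcess) -> List[str]:
    """Return the process components that appear to be missing.

    The thresholds below are illustrative assumptions only.
    """
    gaps = []
    if not p.experts_consulted:
        gaps.append("no expert feedback")
    if len(set(p.stakeholders_consulted)) < 2:
        gaps.append("stakeholder feedback is missing or not diverse")
    if not (p.principles_published and p.process_published):
        gaps.append("principles or formulation process not public")
    if p.revisions_per_year < 1:
        gaps.append("no periodic revision")
    return gaps


# A made-up startup: one expert area, one stakeholder group,
# principles published but not the process, and no revisions planned.
startup = FormulationProcess(
    experts_consulted=["AI ethics"],
    stakeholders_consulted=["customers"],
    principles_published=True,
)
for gap in process_gaps(startup):
    print(gap)
```

The sketch deliberately says nothing about the content of the principles. It only asks who was consulted, what was made public, and whether revisions happen, which mirrors the leeway that a process-focused evaluation leaves organizations in choosing their own values.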

In conclusion, AI ethics principles can be helpful as a basis for action. Organizations that develop AI systems must operationalize the principles and embed them into their workflows. Investors, auditors, and others must evaluate the efficacy of that operationalization. But the first question to ask is “which principles?”, and my answer is: Don’t settle for “universal” AI ethics principles. Whether you create AI systems or evaluate them, push for responsible development of AI ethics principles: incorporating feedback from experts and diverse stakeholders, publicizing the principles and how they were formulated, and periodically revising them.

An earlier version of this article was published by the Montreal AI Ethics Institute.

Author Bio

Ravit Dotan, PhD, is a domain expert in AI ethics, specializing in strategies for responsible development of AI and responsible investment in AI. She has a PhD in philosophy from UC Berkeley, and is currently a fellow at the world-leading Center for Philosophy of Science at the University of Pittsburgh.