NeurIPS 2019, the latest incarnation of the Neural Information Processing Systems conference, wrapped up just over a week ago.

Multiple great blog posts have already summarized various talks and key trends, so the goal of this piece is more humble: to reflect on the experience of attending the conference, and in particular whether its vast size is harmful to its purpose as a research conference.

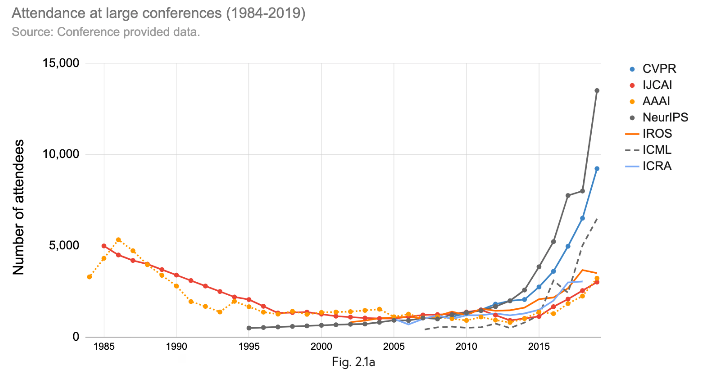

How vast is “vast”? Thirteen thousand attendees, 1,428 accepted papers, and 57 workshops vast. NeurIPS is a massive conference, as captured by attendees on Twitter:

A fun little demonstration of the scale of #NeurIPS2019 - video of people heading into keynote talk.

— Andrey Kurenkov 🤖 @ Neurips (@andrey_kurenkov) December 12, 2019

This is 9 minutes condensed down to 15 seconds, and this is not even close to all the attendees! pic.twitter.com/1VqAHZoqtj

Is that a Rolling Stones concert? No, that's a keynote at #NeurIPS2019 pic.twitter.com/nJjONGzJww

— Jevgenij Gamper (@brutforcimag) December 11, 2019

NeurIPS poster session- Too crowded.

— 柳原 尚史@Ridge-i (@narisan) December 12, 2019

I capture posters and am reading them at cafe outside :-) pic.twitter.com/uPI0i47Dd5

I work in the robotics sub-field of AI, so up to now I’ve mainly attended smaller robotics conferences such as the Conference on Robot Learning (~400 people) and the Robotics: Science and Systems conference (~400 people). The largest conference I’ve attended before was ICRA (~3k people).

For a sense of scale, consider that both coffee breaks and poster sessions at the 2018 Robotics: Science and Systems conference took place in one room that was perhaps 1/50th the size of just the poster session room at NeurIPS, as shown in this video:

You get it, NeurIPS is massive. Is that a good thing or a bad thing? My take: both. Though it's not fun to take the middle ground position, I honestly did find both positive and negative aspects to attending the event, and I will present both here. I'm certainly not the first to point out that NeurIPS being so big may be doing more harm than good — see for instance The Greatest Trade Show North of Vegas (Pressing Lessons from NeurIPS 2018) — so I will say ahead of time I present no strong criticisms, only observations. And, I present these observations acknowledging that the NeurIPS organizing committee has likely put a lot more thought into the purpose and design of the conference than I have, and there are likely no easy solutions to any of the negatives I point out.

The Good

Lots of People

The core purpose of any research conference is to bring together researchers interested in similar things and to let them discuss their work and ideas. NeurIPS accomplishes this nicely precisely because it is so massive: lots of acquaintances, collaborators, and fellow researchers are around to talk to over coffee (or on public panels, over drinks, etc.).

The focus on inclusion, especially via the multiple workshops focused on it (Black in AI, Women in Machine Learning, LatinX in AI, Queer in AI, New In Machine Learning, CiML 2019: Machine Learning Competitions for All), further amplifies this positive quality. Seeing and hearing how many people had a good experience because of them was touching, and a credit to NeurIPS.

Fantastic and a productive day at WiML! It was a treat interacting with brilliant researchers across academic and industries!! And thanks @ylecun for an insightful round table discussion!! @WiMLworkshop @NeurIPSConf #NeurIPS #WiML2019 pic.twitter.com/15gEw6WpHv

— Gowthami (@gowthami_s) December 10, 2019

Learned a lot sharing a table with Yoshua Bengio, the Turing award winner at the @black_in_ai dinner. #NeurIPS 😊 pic.twitter.com/kp1Jvw6U6Q

— 𝙱𝚎𝚗𝚓𝚊𝚖𝚒𝚗 𝙰𝚔𝚎𝚛𝚊 (@BenjaminAkera) December 14, 2019

Thank you to all of the organizers of @black_in_ai at NeurIPS. It is truly an amazing, welcoming community doing immensely important work throughout the AI space. I appreciate all the hard work that went into this event!

— Matthew Kenney (@baykenney) December 12, 2019

Thanks @black_in_ai and everyone envolved in this incredible experience. I'm going back to Brazil feeling a lot of possibilities to envolve the most underprivileged in the discussion of how AI is being created, applied, integrated into society and discussed.

— Ramon Vilarino (@vilarinoramon) December 16, 2019

Lots of Posters/Talks/Topics

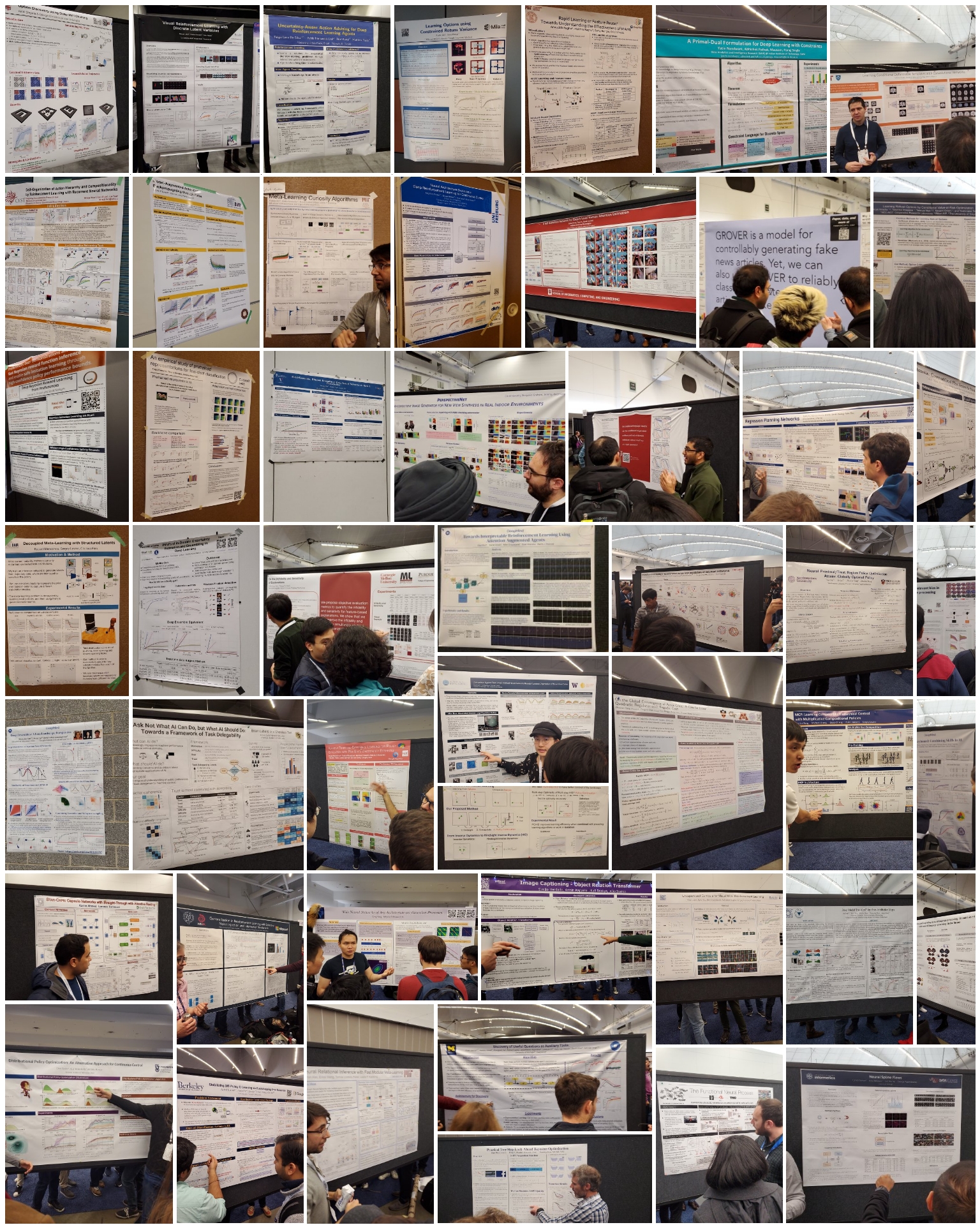

The other primary purpose of any research conference is to inform attendees of new research and inspire new ideas. Here too, the size of NeurIPS is a benefit: much more so than at any previous conference I’ve been to, there was just a ton of research being highlighted from many different sub-fields. While such scale and variety could make it harder rather than easier to find novel research to dig into, for me it did the opposite. At many robotics conferences I have already seen many of the papers on arXiv, or would likely have come across them easily had I explored their topic, whereas the papers I was most excited to discover at NeurIPS were not focused on robotics at all but were rather from CV or NLP. I would likely never have come across many of these papers if not for the conference, and would not have had some of the ideas that came to me after seeing them.

The number of workshops and events also meant that some were more out there, such as the Reproducibility Challenge, the Workshop on Machine Learning for Creativity and Design, the Communication Skills Practicum, and the Retrospectives workshop. These were definitely among my favorite parts of the event, and it was nice to see people from outside the research community who interact with AI (such as journalists and artists) being given a platform to talk directly with us researchers.

Lots of Fun

Let’s be honest, part of why grad students like me are excited to attend conferences is the travel and the socializing with friends. A big conference means lots of sponsors, which means industry parties, which means fun! As conveyed in “An AI conference once known for blowout parties is finally growing up”, the industry parties were tamer than they’ve been purported to be in the past, but attending them in the evenings was very much part of the experience.

Though I did not actually attend many of these events, I still enjoyed discovering downtown Vancouver and going to restaurants with new friends and acquaintances. The evening social events, a new feature of this iteration of the conference, also felt like a welcome development, since they presented an alternative for those who are not inclined to attend loud parties or just did not get invited to the industry events.

The Bad

Lots of People

Part of the reason I have so many photos of posters is that it was often simply not feasible to talk to the people presenting the ones I was most interested in, so instead I kept walking around and looking at other ones. Having in-depth conversations at posters is one of my favorite parts of conferences, and it was definitely something I was less able to do at NeurIPS than at past conferences. And though it is true that having so many people enables many conversations, it also hinders them: at this scale you may well never run into people you know who are also attending unless you make explicit plans to do so, which means some conversations that would otherwise have happened at coffee breaks never occur.

And, though efforts at inclusion deserve praise, they also mean the organizers have all the more responsibility to ensure those who are invited to attend can actually do so. It was therefore unfortunate to hear a few months ago that many people were getting their visa applications for going to NeurIPS rejected:

Canada is denying travel visas to AI researchers headed to NeurIPS — again https://t.co/O1zMBMxhzJ via @VentureBeat

— Timnit Gebru (@timnitGebru) November 10, 2019

NeurIPS 2017, LA, USA: Applied 2 months before the conference -> got the visa 1 month *after* the conference. :(

— Chaitanya Joshi (@chaitjo) December 5, 2019

NeurIPS 2019, Vancouver, Canada: Got the visa 3 weeks before. Bookings for the trip were stressful but I'm glad I can make it.

(Both times for workshops)

To the credit of the AI community, the NeurIPS organizers, and the Canadian government, this problem seemed to have been addressed for the most part in the lead-up to the conference. But it’d still be ideal if it did not happen in the first place.

2019 NeurIPS was last week in Vancouver. There were no major visa debacles. One capable government agency (@ISED_CA) worked with another (@CanBorder). Problem solved(ish). We get upset on Twitter about problems but we don’t celebrate quiet solutions. /3https://t.co/SFhoUbX4SD

— Graeme Moffat (@graemedmoffat) December 17, 2019

The hard part is that many visa exclusion continues for many. Let’s make sure this is not a one-off fix and continue to work towards an inclusive world. ❤️#SautiYetu #Masakhane

— Tẹjúmádé Àfọ̀njá (@tejuafonja) November 27, 2019

Lots of Posters/Talks/Topics

Even with a fairly simple schedule (each of the non-workshop days involved two 2-hour poster sessions in one huge room, two long talks in another giant room, and two periods with multiple short talks being given in a few slightly smaller but still huge rooms), the amount of stuff at the conference meant that just figuring out what to focus on was a challenge. This is in contrast to, for example, CoRL and RSS (the smaller robotics conferences I previously mentioned), which hold all the talks in a single large room and whose poster sessions make it easy to take it all in.

Each workshop was smaller in scale than the entire conference, though some still occupied giant rooms and had dozens of posters and hundreds of attendees. This both helped and hurt: each workshop took an entire day, so in principle it was easy to stick with one and dive deep into a single topic, but in practice it was hard to choose just one, and I ended up running between a few a good deal.

Lastly, more submitted papers mean more need for reviews and more variance in review quality. Though this is a larger issue than just NeurIPS, it should be noted that some ethically dubious papers made it past the review process to be presented at the conference:

.@NeurIPSConf'19 scariest paper award goes to:

— 𝙳𝚊𝚟𝚒𝚍 𝙳𝚊𝚘 (@dwddao) December 17, 2019

'Face Reconstruction from Voice using Generative Adversarial Networks' (Surveillance) and 'Predicting the Politics of an Image Using Webly Supervised Data' (Discrimination). Congrats also to NeurIPS for missing ethical guidelines.🥇

I do not claim to be an authority on what's ethical or not, but multiple people at the conference itself mentioned these specific papers as having clear ethical flaws (of course, for the sake of improving the field, and not just calling out the authors).

Lots of Fun

And yes, the parties were not exclusively a positive thing. As covered well by Zachary Lipton already in The Greatest Trade Show North of Vegas (Pressing Lessons from NeurIPS 2018), the very nature of industry presence distracts from the research aims of the conference, and on top of that the parties being invite-only is a hindrance to inclusivity:

“The expo hall takes too much attention away from the academic events. The room is full of free knickknacks, free food, posh seating, and millions of dollars pumped into flashy attractions. Scientific talks and posters should not have to compete with a CES-style expo hall for attention.

...

The current party culture brings together the socially-connected (regardless of discipline), the recruitable (hot PhD candidates and junior researchers) and established well-known researchers. But excluded are those young researchers who would most benefit most from the opportunity to get to meet potential employers, advisors, and mentors.”

I will say that the expo hall had relatively few knickknacks or attractions that kept me away from the poster session, and I do think it was useful for those looking to interview for internships or full time roles. But, it was also located right next to the poster session, and so those who needed to talk to recruiters for these opportunities likely did miss out on looking at posters or talks.

So, What?

So there you have it, being big is good and being big is bad, for overlapping reasons. Naturally, there is no simple fix to keep the good aspects while removing the bad. Still, let me throw out a few ideas:

- Limit attendance to mostly people with papers to present at the conference. With 1,428 papers accepted, and perhaps double that presented as a whole when including workshops, a huge number of attendees must not have had a paper to present. Though it's a shame to exclude people who are interested in attending, at the end of the day this is an academic conference and the most important thing is that academics in attendance benefit from it as such. Granted, I may be completely wrong here and most of the people were indeed ones who at least submitted papers, but still...

- Revise the reviewing process. How to maintain review quality in the face of growing numbers of submissions is a field-wide problem, and as discussed by the NeurIPS team in a great Medium post, there are no easy answers. Still, here is a more out-there idea that came about during discussions with others at the excellent Retrospectives workshop: allow year-round submission, and cap the total number of accepted papers. That is, allow people to submit year-round or for most of the year, and do reviews on a rolling basis until a certain number of accepted papers has been reached. Hopefully, the reviewing burden would then be more spread out over the year, since there would be an incentive to submit earlier: the earlier you submit, the more likely you are to be assigned reviewers who don't have a bunch of other things to review and can do a better, more careful job as a result.

  Another idea may be to move to a two-stage review process, with the first stage requiring the submission of a short abstract and method description to allow reviewers to evaluate overall novelty and flag ethics issues, and the second stage (for just those papers that made it past the first stage) requiring the submission of the finished papers with results as usual. Lastly and most easily, at least having a code of ethics and a way for reviewers to flag papers for potential further review based on ethical concerns might be a good idea.

- Make better tools. With so much going on, being able to organize it all in one’s head and prioritize what to focus on naturally involves using software tools. The Whova app that we got had a nice agenda that was searchable by keywords, but it was only usable on smartphones and had a very text-heavy, non-visual UI. Other large conferences such as SIGGRAPH or CHI do seem to have better UI tools to navigate their agendas, so it'd be nice if NeurIPS could invest in this a bit more.

- Spread out poster sessions more. Though it was nice to have all the topics side by side in one huge room (since it encouraged people to see things outside of their sub-field), ultimately it did more harm than good by increasing congestion. Since there is already a clear delineation of topics (NLP, CV, theory, RL, etc.), it’d make sense to consider having poster sessions in multiple rooms by topic rather than in just one giant room.

Lastly, let me end on a note of positivity. Though there are drawbacks to being so big, my overall feeling about NeurIPS 2019 (my first academic event of this scale) was of being impressed with how smoothly the whole thing ran, and of loving the variety of work it showcased and the many interesting workshops and talks I got to attend because of it. As long as any future growth is mindfully managed to encourage more of the positives and fewer of the negatives associated with scale, I am excited for NeurIPS to continue being a massive event that I might attend in the future.

And oh so quickly, #NeurIPS2019 (my first Neurips) is over.

— Andrey Kurenkov 🤖 (@andrey_kurenkov) December 15, 2019

As I was sitting by the shore yesterday appreciating the view, got to reflecting on how orderly and functional this whole huge event was, and how cool workshops were.

Bravo to all organizers, speakers, and venue staff! https://t.co/MorjimZYap pic.twitter.com/2kvU2O3bAO

PS feel free to take a look at my favorite talks from the event:

Author Bio

Andrey is a grad student at Stanford who likes to do research about AI and robotics, write code, appreciate art, and ponder life. Currently, he is a PhD student with the Stanford Vision and Learning Lab, advised by Silvio Savarese, working at the intersection of robotics and computer vision. He is also the creator of Skynet Today, and one of the editors of The Gradient. You can find more stuff by him at his site, and follow him on Twitter.

Citation

For attribution in academic contexts or books, please cite this work as

Andrey Kurenkov, "Is NeurIPS Getting Too Big?", The Gradient, 2019.

BibTeX citation:

@article{kurenkov2019neuripst,

author = {Kurenkov, Andrey},

title = {Is NeurIPS Getting Too Big?},

journal = {The Gradient},

year = {2019},

howpublished = {\url{https://thegradient.pub/neurips-2019-too-big/}},

}

If you enjoyed this piece and want to hear more, subscribe to The Gradient and follow us on Twitter.